TL;DR — Bottom Line Up Front

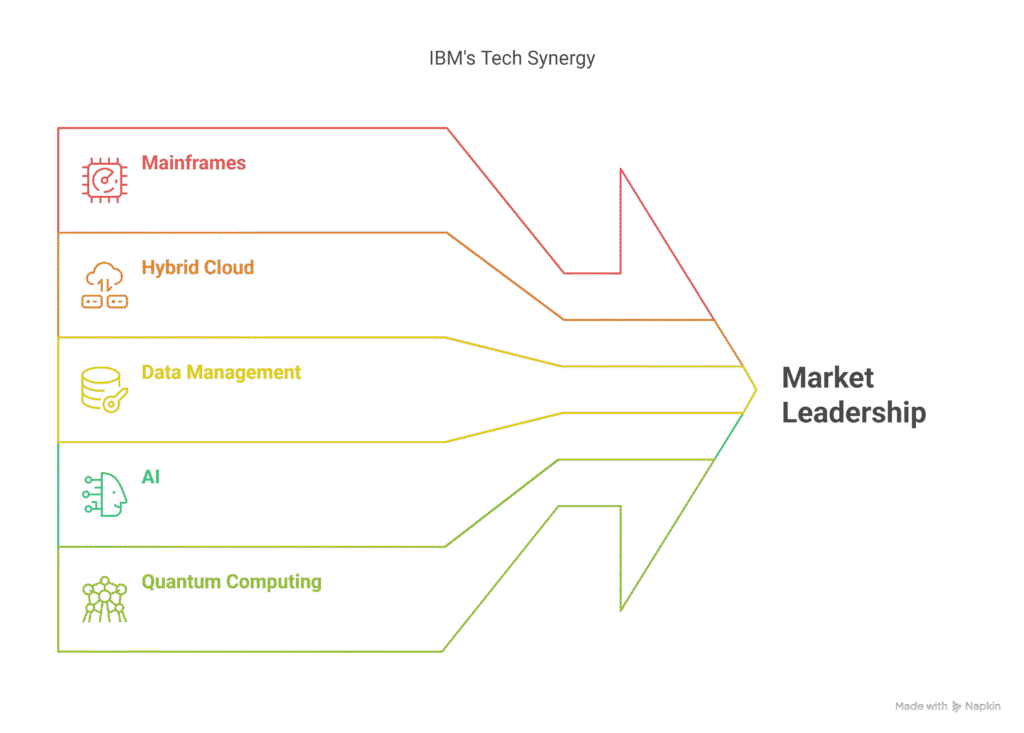

What this covers: How IBM uses its own analytics, BI, and infrastructure tools internally — from mainframes and hybrid cloud to Watson AI — and what enterprise data operations actually look like at scale.

Who it’s for: Analytics and BI professionals, operations managers exploring data careers, and anyone who wants to understand how a major enterprise runs its own data infrastructure before buying or building something similar.

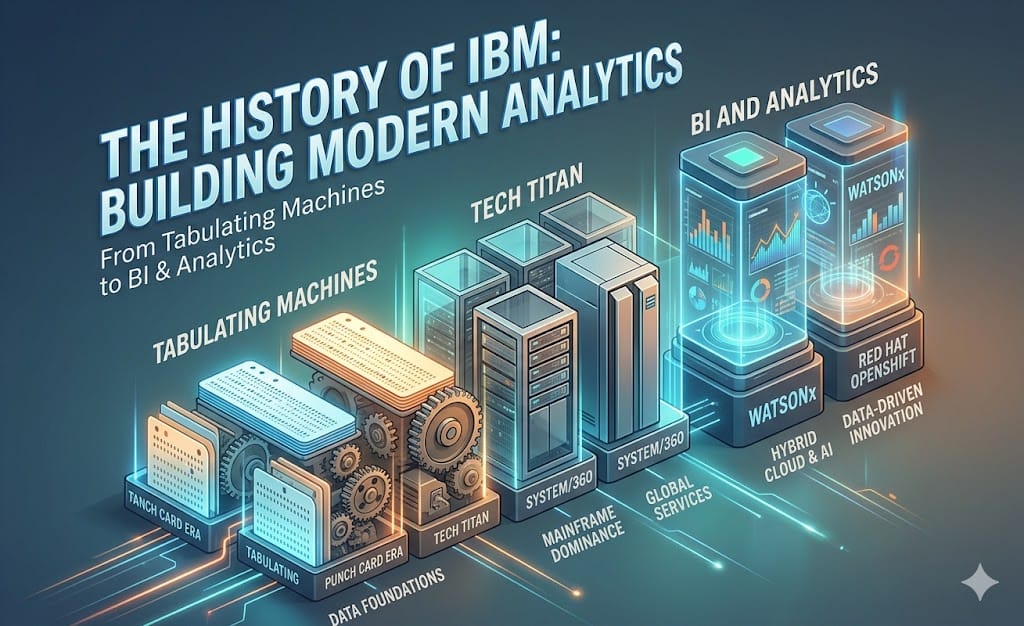

IBM’s global strategy for business intelligence analytics is guided by a core philosophy: “We sell what we use, and we use what we sell.” This principle runs throughout IBM’s global operations, where the company’s own hardware, software, data management, and cloud solutions form the backbone of its internal tech stack. Here’s how those building blocks work together to power innovation, efficiency, and security for one of the world’s most influential technology companies.

IBM IT Infrastructure Foundation: Mainframes, Power Servers, and Hybrid Cloud

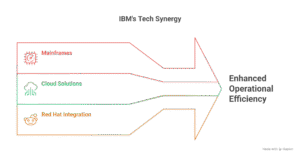

IBM’s hardware infrastructure blends robust mainframes with scalable cloud solutions. A key example is IBM’s transition from legacy SAP to S/4HANA, enabled by its acquisition of Red Hat. The acquisition allowed IBM to run SAP applications on Red Hat Enterprise Linux on Power servers within its own cloud. The migration modernized IBM’s internal ERP for over 150,000 users in 175 countries and reduced infrastructure costs by 30% through automation and server consolidation (IBM adopts ‘Rise with SAP’ for internal ERP cloud move).

IBM Z mainframes are also central to the company’s AI strategy. Many core enterprise data assets in industries like finance, manufacturing, healthcare, and retail live on mainframes. IBM enables organizations to treat these “crown jewels” as first-class citizens in the AI journey, keeping mainframes at the heart of digital transformation (AI on the mainframe? IBM may be onto something).

The IBM Analytics Platform: Software and Automation

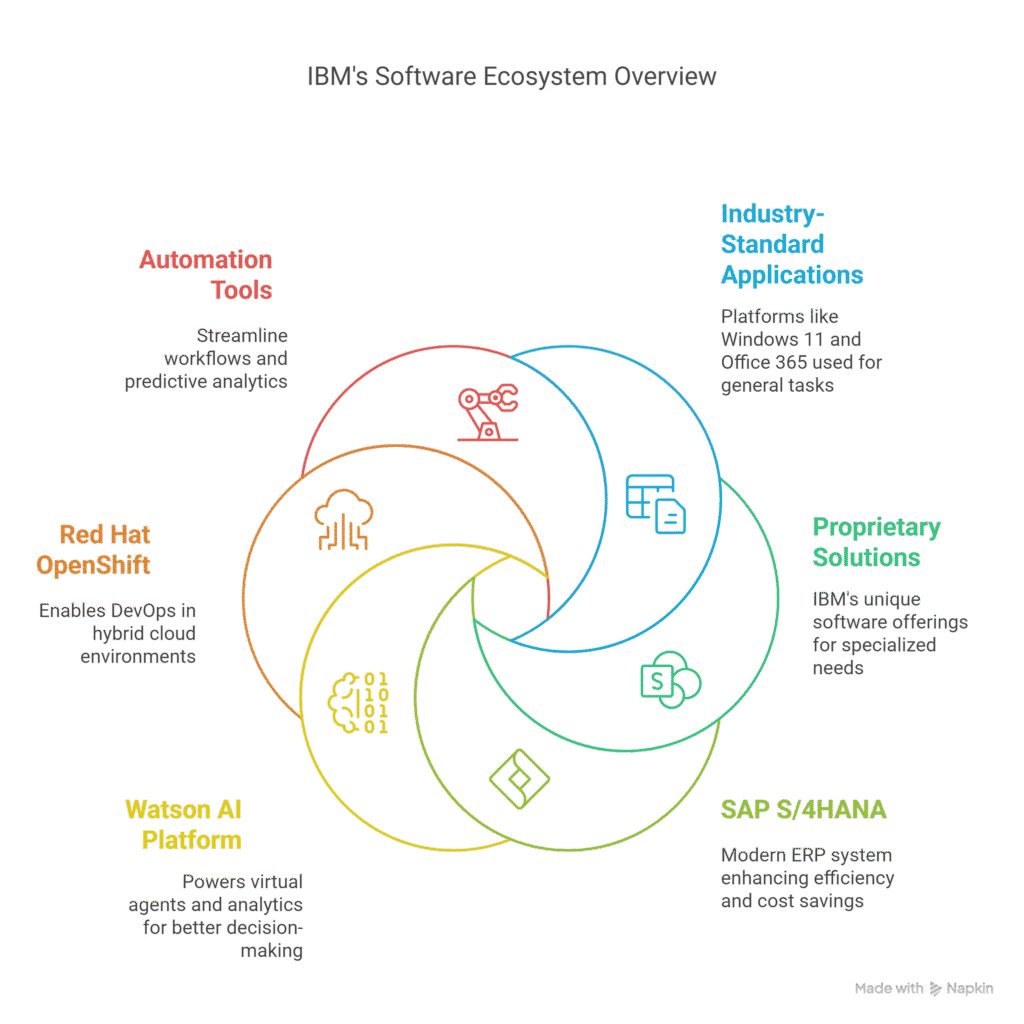

IBM’s software ecosystem is built on a mix of industry-standard applications and proprietary solutions. The company uses platforms like Windows 11, Office 365, Salesforce, SAP, GitHub, and Jira for specialized tasks. The adoption of SAP S/4HANA through the RISE with SAP program modernized IBM’s internal ERP, driving efficiency and cost savings.

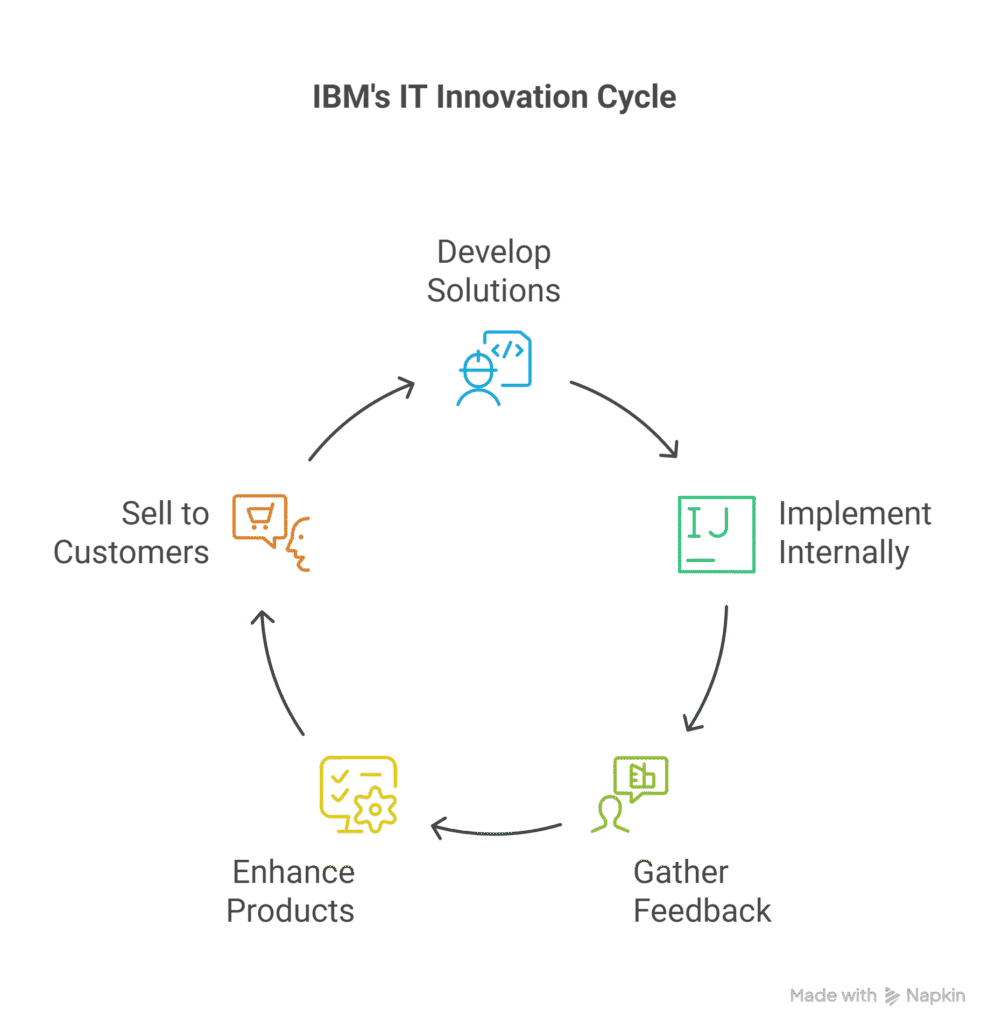

IBM’s Watson AI platform powers virtual agents, analytics, and industry-specific solutions, supporting automation and better decision-making (What can modern Watson do?). Developer tools like Red Hat OpenShift enable DevOps in the hybrid cloud, while automation tools streamline workflows and support predictive analytics.

Data Management: Scaling Business Intelligence Analytics

IBM has been a pioneer in data management since the creation of SQL in the 1970s. Today, IBM uses Watson Knowledge Catalog to enforce data quality, scanning for issues at every level to ensure reliable business intelligence. Security is paramount, with IBM’s Z mainframes protecting critical financial and operational data. Running analytics and AI directly on mainframes allows IBM to maintain strict data governance and minimize risks associated with data transfer.
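The idea of running analytics next to the data, rather than exporting it first, can be illustrated at small scale. The sketch below is a generic stand-in and not IBM's mainframe tooling: it assumes an in-memory SQLite database as the "source system," and the `orders` table, its columns, and the `totals_by_region` helper are hypothetical names chosen for illustration. The aggregation runs inside the database, so only summary rows leave the source.

```python
import sqlite3

# Stand-in source system: an in-memory SQLite database with hypothetical data.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (region TEXT, amount REAL)")
conn.executemany(
    "INSERT INTO orders VALUES (?, ?)",
    [("EMEA", 120.0), ("EMEA", 80.0), ("APAC", 200.0)],
)


def totals_by_region(connection):
    """Aggregate inside the database so only summary rows leave the source."""
    rows = connection.execute(
        "SELECT region, SUM(amount) FROM orders GROUP BY region ORDER BY region"
    ).fetchall()
    return dict(rows)


totals = totals_by_region(conn)
print(totals)
```

The alternative, selecting every row and summing in application code, would move the full detail out of the governed system just to compute two numbers.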

Network and Cloud Architecture: Secure, Scalable, and Flexible

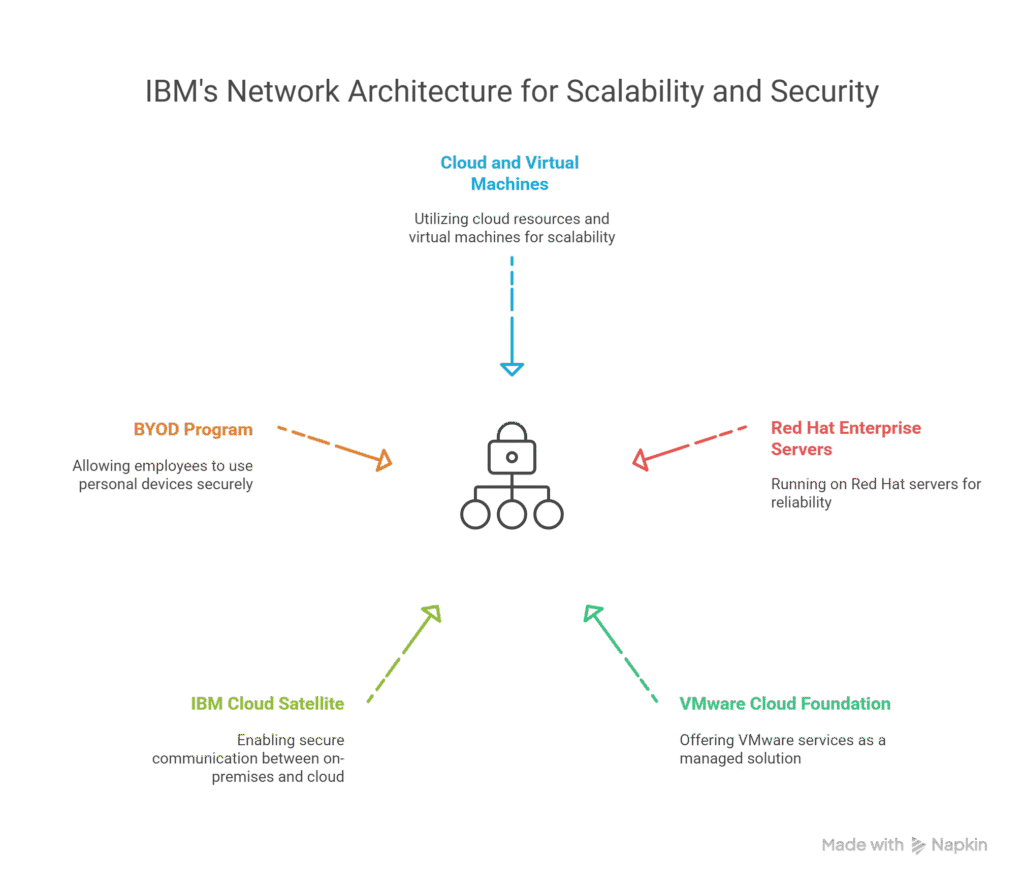

IBM’s IT infrastructure relies on cloud and virtual machines running on Red Hat Enterprise Linux servers, providing scalability and reliability. IBM was the first to run VMware workloads in the cloud and now offers VMware Cloud Foundation as a managed service.

Secure communication between on-premises hardware and the cloud is enabled by IBM Cloud Satellite, which allows organizations to use IBM Cloud services like watsonx while maintaining security. IBM’s BYOD (Bring Your Own Device) program, supported by strong security protocols, boosts productivity while reducing hardware costs (IBM CIO bets big on digital workplace to lure tech talent).

Operational Challenges and Evolution

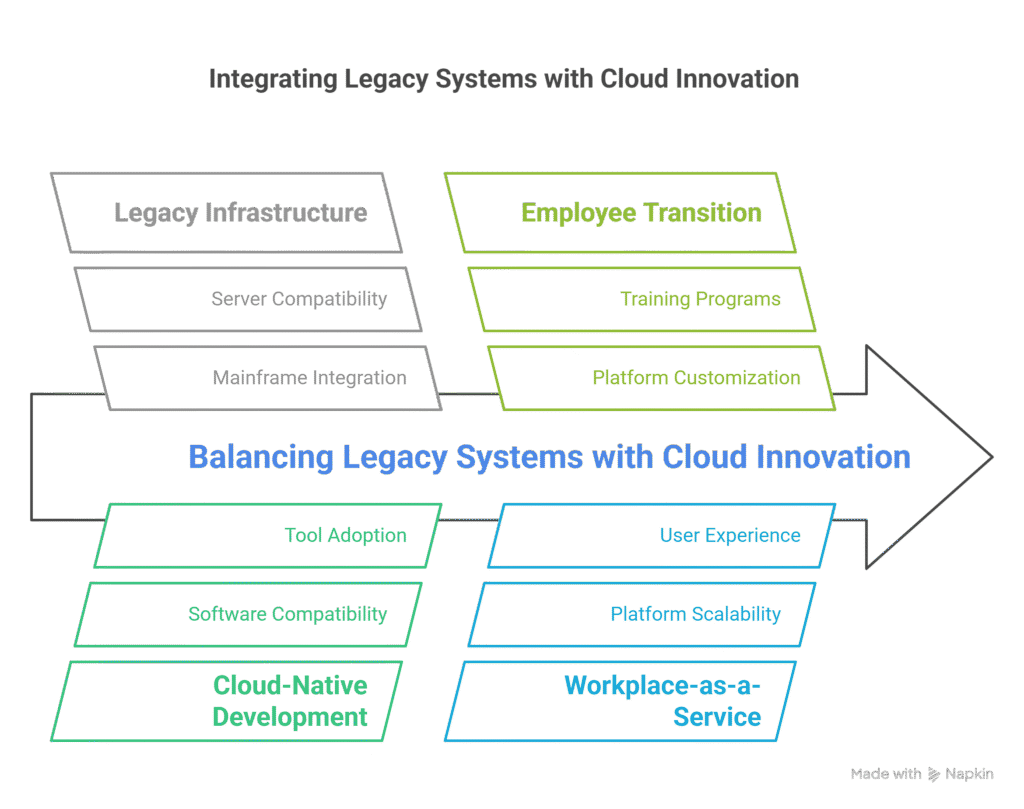

With a history spanning over a century, IBM faces the ongoing challenge of integrating legacy systems with modern cloud-native development. Balancing mainframe infrastructure with cloud innovation is a key focus. IBM’s workplace-as-a-service (WPAAS) initiative, which has transitioned 400,000 employees to a customizable platform, exemplifies this evolution.

Conclusion: IBM’s “Use What We Sell” Philosophy in Action

IBM’s IT infrastructure is ultimately a live product demo: the tools IBM sells are the tools it runs on internally. From mainframes and hybrid cloud to advanced data management and AI, the internal tech stack shows those products working at scale and supports IBM’s leadership in areas like AI and quantum computing.

What Operations and BI Professionals Should Take From This

Most people read about IBM’s analytics infrastructure and think it only applies at enterprise scale. It doesn’t. The principles behind how IBM runs its data-driven operations translate directly to any environment where you’re trying to turn messy operational data into reliable decisions.

Treat data quality as infrastructure, not a cleanup task. IBM uses Watson Knowledge Catalog to enforce data quality before data reaches reporting; the same discipline applies whether you’re governing a WMS export or a 10-table data warehouse.

Run analytics where the data lives rather than moving it. IBM keeps AI workloads on the mainframe to avoid governance gaps, and BI tools for operations professionals work the same way: build your analysis as close to the source system as you can.

Acknowledge that most enterprise data doesn’t start in the cloud. IBM’s hybrid approach exists because legacy infrastructure is real. Your analytics infrastructure strategy has to work around that reality, not pretend it doesn’t exist.
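The data quality principle above can be sketched as a gate that sits in front of reporting. This is a minimal illustration under stated assumptions, not how Watson Knowledge Catalog works: the field names (`sku`, `quantity`, `location`), the rules, and the `quality_gate` function are all hypothetical, modeled loosely on a WMS export.

```python
from dataclasses import dataclass

# Hypothetical required fields for a WMS-style export.
REQUIRED_FIELDS = ("sku", "quantity", "location")


@dataclass
class QualityReport:
    passed: list
    rejected: list  # (row, reason) pairs


def check_row(row):
    """Return a reason string if the row fails a check, else None."""
    for field in REQUIRED_FIELDS:
        if row.get(field) in (None, ""):
            return f"missing {field}"
    if not isinstance(row["quantity"], int) or row["quantity"] < 0:
        return "quantity must be a non-negative integer"
    return None


def quality_gate(rows):
    """Split rows into those safe for reporting and those held back."""
    report = QualityReport(passed=[], rejected=[])
    for row in rows:
        reason = check_row(row)
        if reason is None:
            report.passed.append(row)
        else:
            report.rejected.append((row, reason))
    return report


sample = [
    {"sku": "A-100", "quantity": 12, "location": "DOCK-1"},
    {"sku": "", "quantity": 5, "location": "DOCK-2"},
    {"sku": "B-200", "quantity": -3, "location": "DOCK-1"},
]
report = quality_gate(sample)
print(len(report.passed), "passed;", len(report.rejected), "rejected")
```

Keeping the rejected rows together with a reason, rather than silently dropping them, is what makes the check behave like infrastructure: the failures become something you can monitor and fix at the source.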

The IBM analytics platform is worth studying not because you’ll run Watson, but because the problems IBM solved at scale (quality, governance, hybrid infrastructure, and analytics accessibility) are the same problems showing up in every DC and operations environment that is trying to get serious about data-driven operations.

Frequently Asked Questions

What does “We sell what we use” mean at IBM?

IBM runs its global operations on its own products. When IBM sells Watson or IBM Cloud, it is not pitching theory. It is selling what it actually depends on internally to run payroll, supply chain, and enterprise data for 150,000+ users across 175 countries.

How does IBM use AI internally for analytics?

IBM uses Watson AI for virtual agents, predictive analytics, and automation. Watson Knowledge Catalog enforces data quality internally by scanning for issues at the field level before they reach downstream reporting. IBM also runs AI workloads directly on mainframes to keep sensitive data under strict governance.

Are IBM mainframes still relevant for modern analytics?

Yes. IBM Z mainframes handle core enterprise data assets in finance, healthcare, and manufacturing. Running analytics directly on the mainframe eliminates data movement risk and supports real-time processing at scale. IBM’s position is that moving data to a separate environment introduces latency and governance gaps.

What is IBM Cloud Satellite and how does it fit the analytics stack?

IBM Cloud Satellite allows organizations to run IBM Cloud services, including watsonx and Cloud Pak for Data, on on-premises infrastructure. Internally, IBM uses Satellite to bridge its mainframe infrastructure with cloud-native AI and analytics services.

What can analytics professionals learn from how IBM manages data?

Three principles stand out. IBM treats data quality as infrastructure, not an afterthought. It runs analytics where the data lives rather than moving it. And its hybrid cloud approach acknowledges that most enterprise data does not start in the cloud, so you must build the analytics layer around that reality.
